What does the architecture of a production-grade talent intelligence platform actually look like? This post breaks it down layer by layer — from the user-facing channels all the way to containerized cloud infrastructure — covering the key design decisions that make such a system scalable, maintainable, and extensible.

Modern talent platforms face a unique intersection of engineering challenges: high-cardinality search across millions of candidate profiles, real-time recruiter dashboards, multi-channel data ingestion from applicant tracking systems (ATS) and social platforms, and the need to match job descriptions against resumes with semantic precision. The architecture we'll walk through addresses all of these concerns through deliberate, opinionated design choices.

01 — Application Architecture Overview

The platform is structured around four clean horizontal layers. Each layer builds strictly on the one below, with higher layers consuming services provided by lower ones — a discipline that keeps domain logic separate from shared infrastructure.

A critical design decision is the explicit split within Application Services between platform-specific components and reusable components (highlighted in the original architecture diagram). Taxonomy Manager, Connectors, Self-Registration, and Profiles are architected as standalone, reusable building blocks, designed so that they could be extracted and deployed in a different product context without modification.

02 — Technical Architecture

At the core sit a Flask-based application server, Elasticsearch serving as both search engine and primary database, and a pluggable resume/job description parsing engine.

The Application Server

A Flask app serves as the central integration hub — handling all business logic, connecting outward to external systems (LinkedIn, Taleo, Jobscience and others), and downward to Elasticsearch for data persistence. The architecture is intentionally modular: the parser integration layer is explicitly marked Replaceable, meaning the parsing vendor can be swapped with minimal disruption to the rest of the system.
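One way to picture the "Replaceable" parser boundary is as an adapter interface that the business logic depends on, with each vendor hidden behind its own adapter. The sketch below is an illustration only: `DocumentParser`, `ParsedDocument`, `KeywordParser`, and `ingest` are hypothetical names, and the keyword matcher is a stand-in for a real vendor call, not how the platform's parser integration is actually written.

```python
from abc import ABC, abstractmethod
from typing import Dict, List, NamedTuple


class ParsedDocument(NamedTuple):
    """Vendor-neutral parse result (hypothetical shape)."""
    full_text: str
    skills: List[str]


class DocumentParser(ABC):
    """Contract that every parsing-vendor adapter must satisfy."""

    @abstractmethod
    def parse(self, raw_bytes: bytes) -> ParsedDocument:
        ...


class KeywordParser(DocumentParser):
    """Stand-in adapter; a real adapter would call the vendor's API here."""

    def __init__(self, known_skills: List[str]) -> None:
        self._known = [skill.lower() for skill in known_skills]

    def parse(self, raw_bytes: bytes) -> ParsedDocument:
        text = raw_bytes.decode("utf-8", errors="replace")
        lowered = text.lower()
        # Naive substring match, purely for illustration.
        found = [skill for skill in self._known if skill in lowered]
        return ParsedDocument(full_text=text, skills=found)


def ingest(parser: DocumentParser, raw_bytes: bytes) -> Dict[str, object]:
    """Business logic sees only the DocumentParser contract, never a vendor class."""
    parsed = parser.parse(raw_bytes)
    return {"text": parsed.full_text, "skills": parsed.skills}
```

Swapping vendors then means writing one new `DocumentParser` subclass; callers such as `ingest` stay unchanged.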

Data Flow at a Glance

[Diagram not reproduced. Surviving labels: ... / Taxonomy, App Server, Index, Data, Storage.]

Elasticsearch as the Primary Database

This is the boldest architectural choice: the platform uses Elasticsearch not as a secondary search layer bolted on top of a relational database, but as the primary database. This works because talent matching is fundamentally a search problem — filtering candidates by skills, matching job descriptions to profiles, and navigating hierarchical taxonomies are all operations that map naturally to Elasticsearch's inverted index and full-text search model.

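The tradeoff is real: Elasticsearch offers no multi-document ACID transactions, and newly indexed documents become searchable only after the next index refresh (one second by default), so search is near-real-time rather than immediately consistent. For a read-heavy, search-intensive workload, the design accepts those limits in exchange for search performance and horizontal scalability.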
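To make the "matching is a search problem" point concrete, here is a minimal sketch of how a candidate search might be expressed as an Elasticsearch bool query: hard skill requirements go in filter context, and the job description text is scored with a full-text match. The field names `skills` and `resume_text` are assumptions, as is the idea that skills are stored as lowercased keyword values; the source does not describe the actual mapping.

```python
from typing import Any, Dict, List


def build_candidate_query(jd_text: str, required_skills: List[str],
                          size: int = 20) -> Dict[str, Any]:
    """Build a bool query body for candidate search.

    Field names ("skills", "resume_text") are hypothetical.
    """
    # Filter clauses must all match and do not contribute to the relevance score.
    filters = [{"term": {"skills": skill.lower()}} for skill in required_skills]
    return {
        "size": size,
        "query": {
            "bool": {
                "filter": filters,
                # The match clause drives ranking against the job description text.
                "must": [{"match": {"resume_text": jd_text}}],
            }
        },
    }
```

The resulting dictionary would be sent as the body of a search request against the candidate index.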

External Integrations

- Taleo

- Jobscience

- Other Sources (Adaptable)

Parsing & Dashboards

- Sovren Resume/JD Parser

- Parser Integration (Replaceable)

- Kibana Dashboards

- Raw File Storage (Azure Blob)

Kibana sits on top of Elasticsearch providing analytics dashboards for recruiters and executives. Crucially, raw resume and JD files are kept in separate blob storage — maintaining a clean separation between the processed, indexed representation of a document and the original artifact.
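The separation between the indexed representation and the original artifact can be sketched as an index document that carries only a pointer to the blob, never the bytes themselves. Everything here is illustrative: `build_index_document`, the field names, and the checksum field are assumptions, not the platform's actual schema.

```python
import hashlib
from typing import Any, Dict, List


def build_index_document(raw_bytes: bytes, blob_url: str,
                         text: str, skills: List[str]) -> Dict[str, Any]:
    """Return the searchable document; the raw file stays in blob storage."""
    return {
        "resume_text": text,
        "skills": skills,
        # Pointer back to the original artifact, plus a checksum so the
        # indexed record can be tied to one exact version of the file.
        "raw_file": {
            "url": blob_url,
            "sha256": hashlib.sha256(raw_bytes).hexdigest(),
            "size_bytes": len(raw_bytes),
        },
    }
```

If the parser is later replaced, the stored originals can be re-parsed and re-indexed without asking candidates to upload again.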

03 — Deployment Pipeline

A three-stage deployment model — Development → Staging → Production — fully containerized with Docker and orchestrated via Azure Kubernetes Service (AKS).

Development

Local development uses Docker Compose to orchestrate all services (the Flask app, Elasticsearch, and Kibana) in a coordinated local environment. A single docker-compose up brings up the same service topology as production at single-node scale, which reduces environment drift and shortens onboarding for new engineers.

Staging → Production via Container Registry

Docker images built during staging are pushed to Azure Container Registry. Staging mirrors the production topology at reduced scale, serving as the gate for integration testing and UAT. Promotion to production involves deploying the same container images — no rebuilds, no surprises.

Production on AKS

Production runs on Azure Kubernetes Service within a network boundary protected by a VPN and Azure Network Security Groups (NSGs). Two container groups form the production cluster:

Elasticsearch Containers

- Multiple ES nodes (horizontally scalable)

- Kibana on whitelisted IPs only

- Azure Disk persistent storage

- VPN/NSG protected boundary

API / Frontend Servers

- Flask + uWSGI app containers

- Nginx as reverse proxy

- Azure Load Balancer (public-facing)

- Horizontally scalable replicas

Elasticsearch is never directly exposed to the internet — only Kibana is accessible externally, and only to whitelisted IPs. API servers face the internet through an Azure Load Balancer, distributing traffic across multiple container replicas. This split-exposure model gives the analytics team access to Kibana while keeping the data layer completely private.

By containerizing every component — from the app server to Elasticsearch — the platform achieves environment parity across all three deployment stages. What runs locally is exactly what runs in production.

04 — Authentication & Security

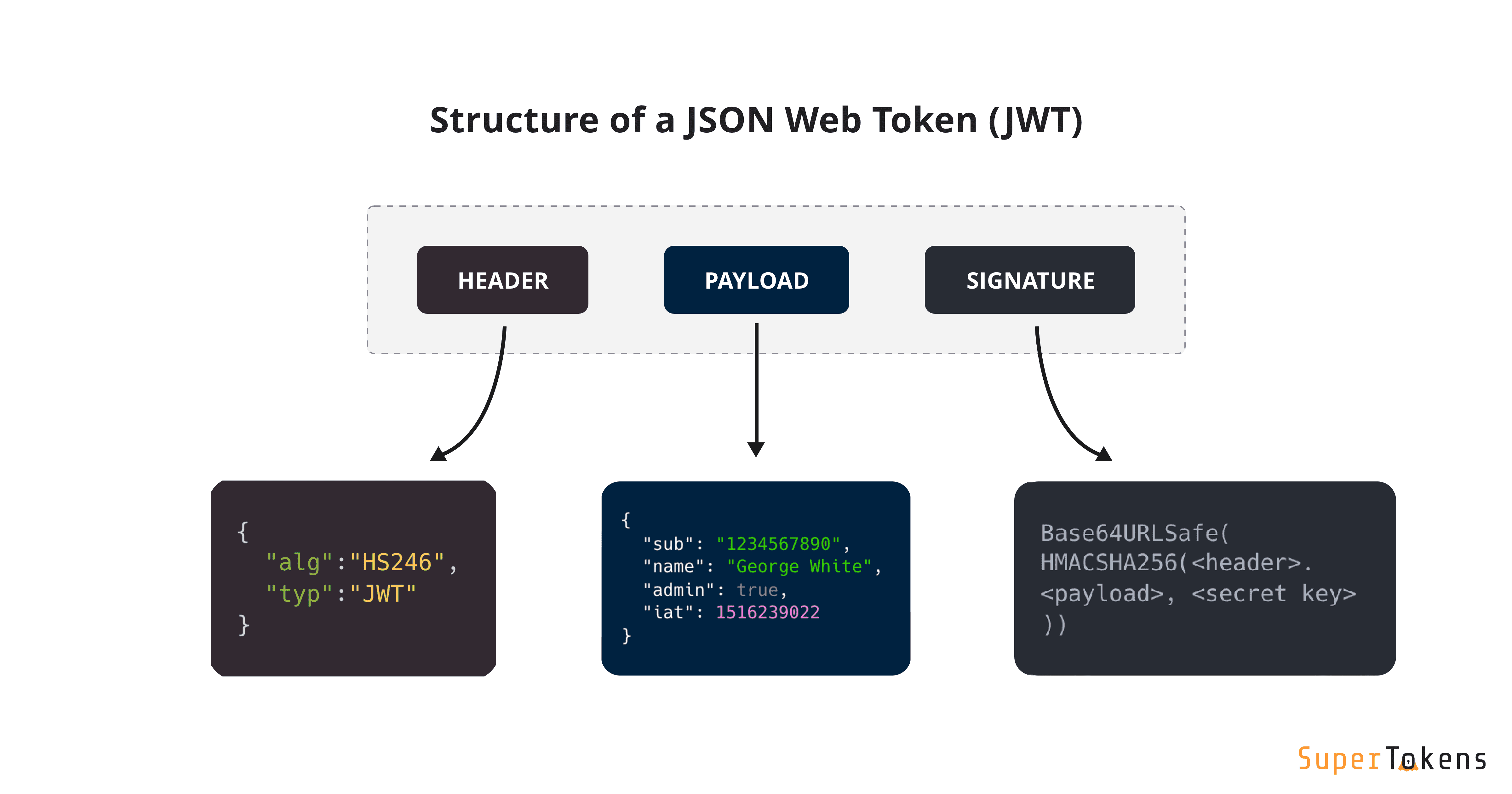

The platform supports username/password login and external OAuth (Google, LinkedIn, Facebook), issuing HS256-signed JWTs with configurable validity windows.

The JWT Flow

When a user authenticates, either directly or through an OAuth provider, the Flask app server validates the credentials and returns a signed JWT. The client stores this token and sends it with every subsequent request. The server validates the token on each request; no session state is stored server-side.

Token Structure

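Tokens are signed using HS256 (HMAC-SHA256) with configurable validity windows. The payload carries four fields: expiration (exp), issued-at (iat), not-before (nbf), and an identity string. The identity field is an opaque ID, not a readable username or email. That matters because a JWT payload is only base64url-encoded, not encrypted, so anyone holding the token can read it; an opaque ID avoids leaking PII into client-accessible payloads. In the example below, exp is 300 seconds after iat, a five-minute validity window.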

{
  "exp": 1515044478,                   // token expiration timestamp
  "iat": 1515044178,                   // issued at
  "nbf": 1515044178,                   // not valid before
  "identity": "AWC_qrkjqSWdEnD3N7H_"   // opaque user ID
}
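The signing and stateless validation described above can be sketched with nothing but the Python standard library. This is a teaching sketch, not the platform's code: the source does not say which JWT library is used, `issue_token` and `verify_token` are hypothetical names, and a production system would normally rely on a maintained JWT library rather than hand-rolled encoding.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Any, Dict, Optional


def _b64url(data: bytes) -> str:
    """Base64url-encode without padding, as JWTs require."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text: str) -> bytes:
    """Restore the stripped padding, then decode."""
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def issue_token(identity: str, secret: bytes, ttl_seconds: int = 300,
                now: Optional[int] = None) -> str:
    """Create an HS256-signed token with exp/iat/nbf/identity claims."""
    issued = int(time.time()) if now is None else now
    header = {"alg": "HS256", "typ": "JWT"}
    payload = {"exp": issued + ttl_seconds, "iat": issued,
               "nbf": issued, "identity": identity}
    signing_input = ".".join(
        _b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, payload)
    )
    signature = hmac.new(secret, signing_input.encode("ascii"),
                         hashlib.sha256).digest()
    return signing_input + "." + _b64url(signature)


def verify_token(token: str, secret: bytes,
                 now: Optional[int] = None) -> Dict[str, Any]:
    """Check signature first, then the time window; needs no server-side state."""
    current = int(time.time()) if now is None else now
    try:
        header_b64, payload_b64, signature_b64 = token.split(".")
        signature = _b64url_decode(signature_b64)
    except ValueError as exc:
        raise ValueError("malformed token") from exc
    expected = hmac.new(secret, f"{header_b64}.{payload_b64}".encode("ascii"),
                        hashlib.sha256).digest()
    # Constant-time comparison avoids leaking how many bytes matched.
    if not hmac.compare_digest(signature, expected):
        raise ValueError("bad signature")
    header = json.loads(_b64url_decode(header_b64))
    if header.get("alg") != "HS256":
        raise ValueError("unexpected algorithm")
    payload = json.loads(_b64url_decode(payload_b64))
    if current < payload["nbf"]:
        raise ValueError("token not yet valid")
    if current >= payload["exp"]:
        raise ValueError("token expired")
    return payload
```

Because verification depends only on the token and the shared secret, any replica holding that secret can validate any request.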

External OAuth

OAuth integration for Google, LinkedIn, and Facebook offloads credential management and MFA to trusted identity providers. When external auth is used, the OAuth provider handles the authentication flow; the app server consumes the returned profile data to provision or update the local account and issues its own JWT — maintaining a consistent token model regardless of auth method.
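The "provision or update, then issue our own JWT" step might look roughly like the sketch below. It assumes the OAuth handshake has already completed and produced a profile dictionary; `AccountStore`, `complete_oauth_login`, and the profile keys (`id`, `name`, `email`) are hypothetical, since real providers return differently shaped profiles and the platform's account storage is not described in the source.

```python
import uuid
from typing import Callable, Dict, Tuple


class AccountStore:
    """In-memory stand-in for the real account storage (hypothetical)."""

    def __init__(self) -> None:
        self._by_external_id: Dict[Tuple[str, str], Dict[str, str]] = {}

    def upsert(self, provider: str, profile: Dict[str, str]) -> Dict[str, str]:
        # Key on (provider, provider user ID) so the same person logging in
        # again maps to the same local account.
        key = (provider, profile["id"])
        account = self._by_external_id.get(key)
        if account is None:
            # First login: provision an account with an opaque identity.
            account = {"identity": uuid.uuid4().hex}
            self._by_external_id[key] = account
        # Every login: refresh the locally cached profile data.
        account["name"] = profile.get("name", "")
        account["email"] = profile.get("email", "")
        return account


def complete_oauth_login(provider: str, profile: Dict[str, str],
                         store: AccountStore,
                         issue_token: Callable[[str], str]) -> str:
    """Provision or update the local account, then issue the platform's own token."""
    account = store.upsert(provider, profile)
    return issue_token(account["identity"])
```

Passing the token issuer in as a function keeps this step independent of the login method, which is what gives both paths a single token model.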

05 — Technology Stack & Configuration

Backend

- Python 3.6

- Flask 0.12.2

- uWSGI + Nginx

- Sovren (parsing engine)

Frontend

- React 16.2.0

- Single-page application

- Web + Mobile responsive

Data Layer

- Elasticsearch 6.1.1

- Kibana 6.1.1

- Azure Disk (ES persistence)

- Azure Blob (file backups)

Infrastructure

- Docker + Docker Compose

- Azure AKS (production)

- Azure Container Registry

- Ubuntu 16.04 (Docker host)

uWSGI + Nginx

Flask's built-in development server is not meant for production use, so the stack puts the app behind the battle-tested uWSGI + Nginx combination. Nginx handles TLS termination, static file serving, and reverse proxying; uWSGI manages the Python application worker processes. This two-tier setup lets concurrency be tuned through the uWSGI worker count, independently of the application code.
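What uWSGI actually hosts is a WSGI callable: a function that takes the request environment and a `start_response` callback and returns the response body. The minimal sketch below shows that interface using only the standard library; the `/health` path and the JSON bodies are made up for illustration.

```python
from typing import Callable, Dict, Iterable, List, Tuple

StartResponse = Callable[[str, List[Tuple[str, str]]], object]


def application(environ: Dict[str, object],
                start_response: StartResponse) -> Iterable[bytes]:
    """Minimal WSGI callable: the interface a uWSGI worker invokes per request."""
    if environ.get("PATH_INFO") == "/health":
        status, body = "200 OK", b'{"status": "ok"}'
    else:
        status, body = "404 Not Found", b'{"error": "not found"}'
    start_response(status, [
        ("Content-Type", "application/json"),
        ("Content-Length", str(len(body))),
    ])
    # WSGI bodies are iterables of bytes.
    return [body]
```

A Flask application object exposes this same callable interface, which is why uWSGI can run it across multiple worker processes while Nginx proxies requests to them.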

Azure Disk vs. Azure Blob

Storage is split by access pattern. Elasticsearch's index data and transaction log (translog) live on Azure Disk (block storage), which provides the low-latency random I/O that ES requires. Raw resume and JD files go to Azure Blob: cheaper object storage, with optional geo-redundancy, optimized for large binary objects. Right tool, right job.

06 — Key Design Principles

Several architectural patterns in this platform are worth carrying forward into your own designs:

Search-first data modeling. When your core workload is search — filtering, matching, ranking — consider whether Elasticsearch as your primary store makes more sense than adding it as a secondary layer on top of a relational database. The operational complexity is real, but so are the performance gains.

Replaceability by design. Marking integration points as "Replaceable" or "Adaptable" isn't just documentation — it's an architectural contract. It signals to every future engineer that these boundaries are extension points, not implementation details to be coupled against.

Reusable components as first-class citizens. Separating reusable components (Taxonomy Manager, Connectors, Profiles) from platform-specific ones means the next product built on this foundation gets those capabilities for free. This is how platforms scale beyond a single product.

Container-first, environment-parity deployment. Running Docker Compose locally and AKS in production with the same images isn't just convenient — it eliminates a whole class of "works on my machine" bugs and makes your CI pipeline a genuine quality gate.

Stateless authentication at scale. JWT-based auth with configurable validity windows is a clean, horizontally scalable approach. No shared session store means any app server replica can validate any request, provided every replica holds the same HS256 signing secret. That property is essential when running multiple container replicas behind a load balancer.

The most interesting architectural bets here are using Elasticsearch as a primary database and containerizing everything from day one. Both are tradeoffs — but for a search-heavy platform deployed on cloud infrastructure, they're the right tradeoffs to make.